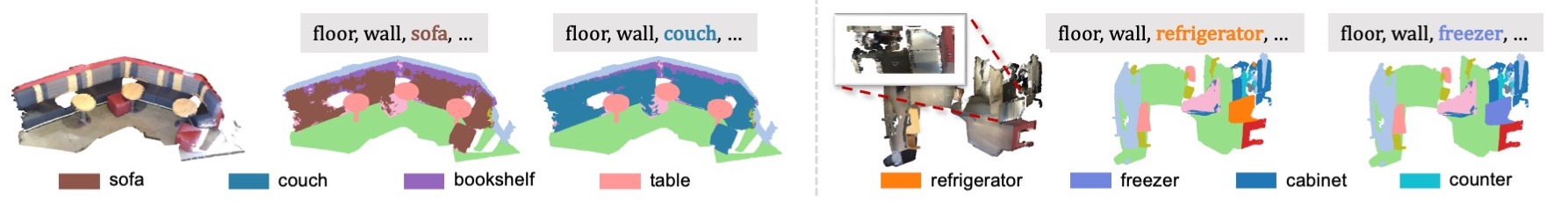

Panel labels: "vending machine", "piano", "stove", "working office"

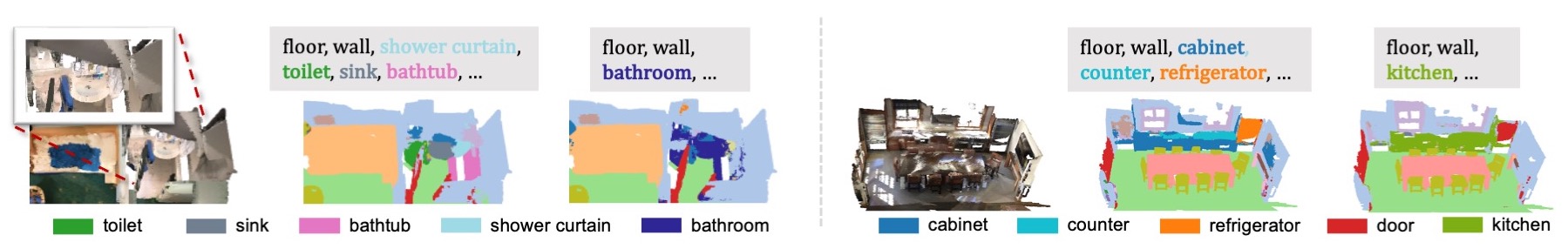

Hierarchical novel concepts: "bathroom", "kitchen"

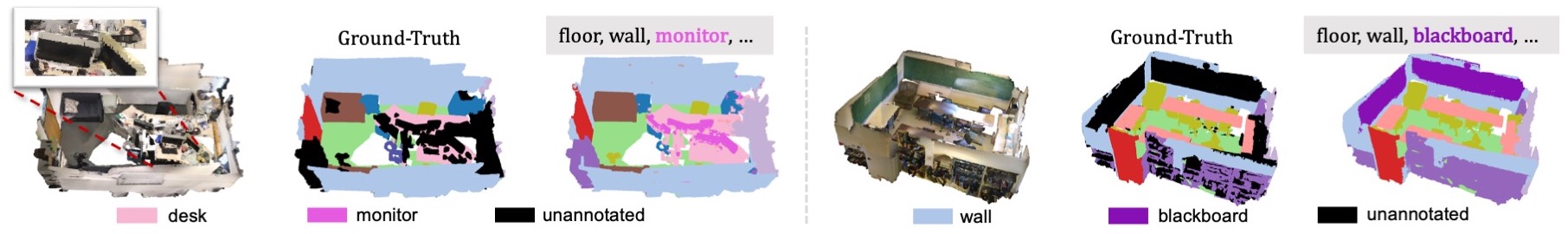

Fine-grained novel concepts: "monitor", "blackboard"

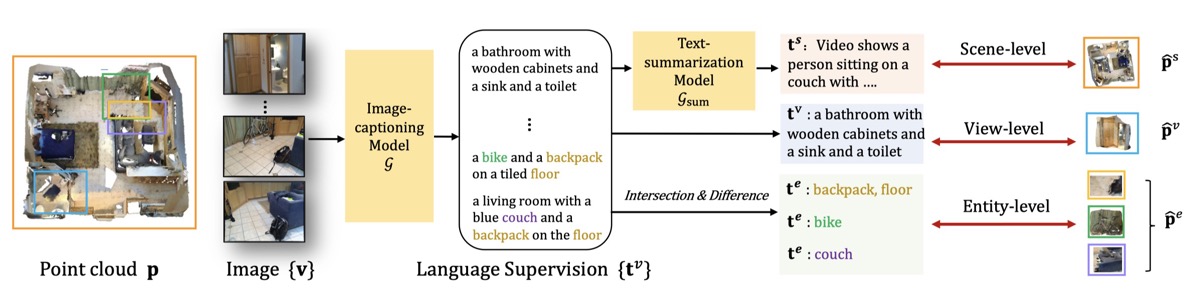

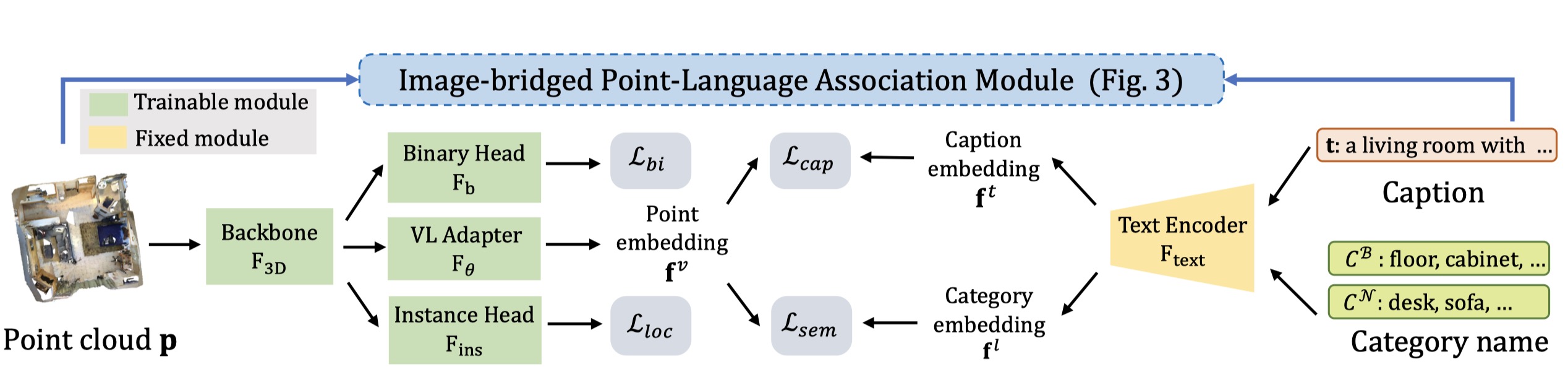

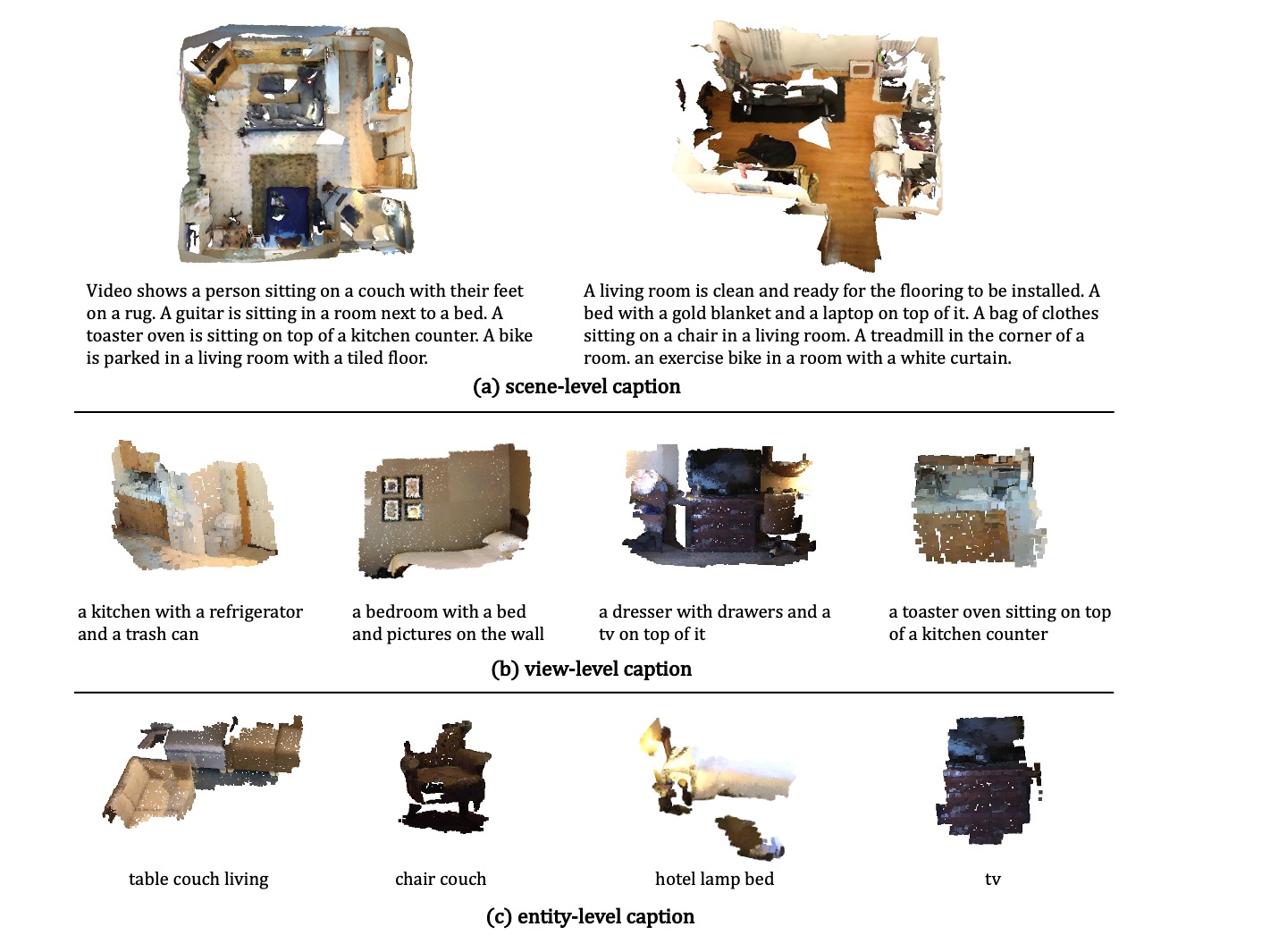

Open-vocabulary scene understanding aims to localize and recognize unseen categories beyond the annotated label space. Recent breakthroughs in 2D open-vocabulary perception are largely driven by Internet-scale paired image-text data with rich vocabulary concepts. However, this success cannot be directly transferred to 3D scenarios because large-scale 3D-text pairs are unavailable. To this end, we propose to distill knowledge encoded in pre-trained vision-language (VL) foundation models by captioning multi-view images of 3D scenes, which allows explicitly associating 3D data with semantic-rich captions. Further, to facilitate coarse-to-fine visual-semantic representation learning from captions, we design hierarchical 3D-caption pairs, leveraging geometric constraints between 3D scenes and multi-view images. Finally, by employing contrastive learning, the model learns language-aware embeddings that connect 3D and text for open-vocabulary tasks. Our method not only outperforms baseline methods by 25.8% to 44.7% hIoU and 14.5% to 50.4% hAP50 on open-vocabulary semantic and instance segmentation, but also shows robust transferability on challenging zero-shot domain transfer tasks.
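To make the contrastive step more concrete, the following is a minimal sketch of a caption-to-3D InfoNCE loss. It is not the released PLA implementation. The function names (`mean_pool`, `info_nce`), the mean pooling of per-point features, the temperature value, and the toy 2-D embeddings are all illustrative assumptions; the text above only states that contrastive learning connects 3D and text embeddings.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit length (returned unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def mean_pool(point_feats):
    """Assumed pooling: average the features of the points paired with one caption."""
    n = len(point_feats)
    dim = len(point_feats[0])
    return [sum(p[d] for p in point_feats) / n for d in range(dim)]


def info_nce(pooled_3d, caption_embs, temperature=0.1):
    """Caption-to-3D InfoNCE: caption i should score highest with 3D embedding i."""
    s = [l2_normalize(v) for v in pooled_3d]
    c = [l2_normalize(v) for v in caption_embs]
    loss = 0.0
    for i, cap in enumerate(c):
        logits = [dot(cap, sv) / temperature for sv in s]
        m = max(logits)  # subtract the max for a numerically stable log-sum-exp
        log_denom = m + math.log(sum(math.exp(l - m) for l in logits))
        loss += log_denom - logits[i]  # negative log-softmax of the matching pair
    return loss / len(c)


# Toy data (hypothetical): two point sets, each paired with one caption embedding.
point_sets = [
    [[1.0, 0.0], [0.8, 0.2]],
    [[0.0, 1.0], [0.2, 0.8]],
]
captions = [[1.0, 0.0], [0.0, 1.0]]

pooled = [mean_pool(p) for p in point_sets]
aligned_loss = info_nce(pooled, captions)
swapped_loss = info_nce(pooled, captions[::-1])
print(aligned_loss, swapped_loss)
```

In this toy setup the loss is low when each caption embedding points in the same direction as its paired 3D embedding and high when the pairing is swapped, which is the signal that pulls 3D features toward the text space.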

@inproceedings{ding2022language,
  title={PLA: Language-Driven Open-Vocabulary 3D Scene Understanding},
  author={Ding, Runyu and Yang, Jihan and Xue, Chuhui and Zhang, Wenqing and Bai, Song and Qi, Xiaojuan},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  year={2023}
}